LangChain Vs LlamaIndex: A Detailed Comparison

Comparing LangChain and LlamaIndex: A Comprehensive Overview

LangChain and LlamaIndex are advanced frameworks designed to enhance the capabilities of large language models (LLMs). LangChain focuses on building complex workflows and interactive applications, while LlamaIndex emphasizes seamless data integration and dynamic data management.

This article provides a comprehensive comparison between these two frameworks, exploring their unique features, tools, and ecosystems. Detailed sections cover LangChain’s definition, core features, tools, and ecosystem, followed by a similar examination of LlamaIndex. Additionally, a dedicated section compares the code implementations of both frameworks, highlighting their differences in approach and functionality.

Finally, the article summarizes the main distinctions between LangChain and LlamaIndex, offering insights into their respective strengths and suitable use cases, and guiding developers and data scientists in selecting the right framework for their specific needs.

Table of Contents:

1. LangChain

1.1. What is LangChain?

1.2. LangChain Main Features

1.3. LangChain Tools

1.4. LangChain Ecosystem

2. LlamaIndex

2.1. What is LlamaIndex?

2.2. LlamaIndex Main Features

2.3. LlamaIndex Tools

Summary of Code Comparison

3. Code Implementation Comparison Between LangChain and LlamaIndex

4. Summary of Main Differences


1. LangChain

1.1. What is LangChain?

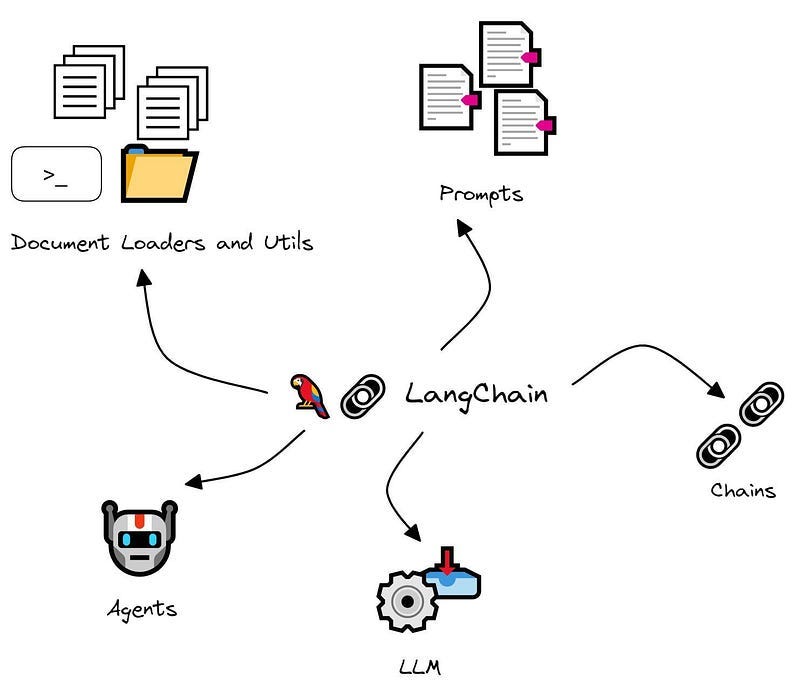

LangChain is a framework designed to facilitate the development of applications powered by language models. It offers a robust toolkit for creating and managing workflows that integrate various components, such as language models, data sources, and user interfaces.

The primary goal of LangChain is to streamline the development process of applications that leverage natural language processing (NLP) capabilities, making it easier for developers to build complex, interactive, and intelligent systems.

1.2. LangChain Main Features

Key features and components of LangChain include:

Chain of Components: LangChain allows developers to create chains of processing steps, where each step can be a different NLP model or function. These chains can be customized to handle a variety of tasks, such as data preprocessing, model inference, and post-processing.

Modularity: The framework is modular, meaning that individual components can be easily replaced or updated without affecting the entire workflow. This modularity supports experimentation and optimization.

Data Integration: LangChain supports integration with various data sources, allowing applications to pull in data from databases, APIs, and other external sources. This capability is essential for building data-driven applications.

Interactivity: It provides tools to create interactive applications, such as chatbots or virtual assistants, that can engage with users in real-time. These tools handle user input, manage context, and generate appropriate responses.

Scalability: LangChain is designed to handle large-scale applications and can be deployed in cloud environments. This scalability ensures that applications can manage high volumes of data and user interactions.

Ease of Use: By abstracting many of the complexities involved in working with language models, LangChain makes it easier for developers, including those without deep expertise in NLP, to build powerful applications.

1.3. LangChain Tools

LangChain’s tools include Model I/O, Retrieval, Chains, Memory, and Agents. Each tool is explained in detail below:

1.3.1. Model I/O

At the heart of LangChain’s capabilities lies Model I/O (Input/Output), a crucial component for leveraging the potential of large language models (LLMs). This feature offers developers a standardized and user-friendly interface to interact with LLMs, simplifying the creation of LLM-powered applications to address real-world challenges.

Model I/O handles the complexities of input formatting and output parsing, allowing developers to focus on building effective and efficient solutions.
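The format-call-parse cycle described above can be sketched in plain Python. This is a conceptual illustration only, not LangChain's API: `fake_llm`, `format_prompt`, and `parse_comma_list` are hypothetical names, and the model call is replaced by a canned response so the example runs without any external service.

```python
from string import Template


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption): returns a canned answer."""
    return "red, green, blue"


def format_prompt(subject: str, n: int) -> str:
    """Input side: fill a reusable template with the caller's variables."""
    template = Template("List $n $subject, separated by commas.")
    return template.substitute(n=n, subject=subject)


def parse_comma_list(text: str) -> list[str]:
    """Output side: turn the raw model string into structured data."""
    return [item.strip() for item in text.split(",") if item.strip()]


prompt = format_prompt("colors", 3)
colors = parse_comma_list(fake_llm(prompt))
print(prompt)
print(colors)
```

Keeping formatting and parsing in separate functions means the model call in the middle can be swapped without touching either side, which is the point of a standardized interface.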

1.3.2. Retrieval

Many LLM applications need user-specific data that was not part of the model's training data. This is achieved through Retrieval-Augmented Generation (RAG).

RAG involves fetching external data and supplying it to the LLM during the generation process. This approach ensures that the language model can generate more accurate and contextually relevant responses by leveraging up-to-date and specific information from external sources.
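A minimal sketch of the fetch-then-generate idea follows. It is not LangChain's retrieval API. As a simplifying assumption, retrieval here ranks documents by keyword overlap, where a real system would typically use embeddings and a vector store. The document list and the names `tokenize`, `retrieve`, and `build_augmented_prompt` are hypothetical.

```python
import re

# Tiny in-memory "knowledge base" (illustrative data only).
DOCUMENTS = [
    "LangSmith helps trace and evaluate LLM applications.",
    "LangServe deploys chains as REST APIs.",
    "LangGraph builds stateful, multi-actor applications.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents sharing the most terms with the query."""
    query_terms = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_terms & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_augmented_prompt(query: str, documents: list[str]) -> str:
    """Place the retrieved context ahead of the question for the model."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


question = "Which tool deploys chains as REST APIs?"
augmented_prompt = build_augmented_prompt(question, DOCUMENTS)
print(augmented_prompt)
```

The augmented prompt, rather than the bare question, is what would be sent to the model.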

1.3.3. Chains

While a standalone LLM may suffice for simple tasks, complex applications often require chaining LLMs together or combining them with other components. LangChain offers two approaches for this: the traditional Chain interface and the more modern LangChain Expression Language (LCEL).

LCEL is particularly well suited to composing chains in new applications, providing a flexible and expressive syntax. LangChain also offers pre-built Chains, and the two approaches can coexist in the same application.
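LCEL composes steps with the `|` operator. The sketch below imitates that composition style in plain Python to show the underlying idea. The `Step` class and the three steps are hypothetical stand-ins, not LangChain classes, and the "model" simply echoes its input.

```python
from typing import Any, Callable


class Step:
    """A single processing step that can be piped into another step."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # Composition: the output of this step feeds the next one.
        return Step(lambda value: other.func(self.func(value)))

    def invoke(self, value: Any) -> Any:
        return self.func(value)


# Three steps: build a prompt, call a fake model, clean up the output.
make_prompt = Step(lambda topic: f"Write one word about {topic}.")
fake_model = Step(lambda prompt: f"  ECHO: {prompt}  ")
clean_output = Step(lambda text: text.strip().lower())

chain = make_prompt | fake_model | clean_output
result = chain.invoke("chains")
print(result)
```

Because each step only depends on the value it receives, any one of them can be replaced without rewriting the rest of the pipeline.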

1.3.4. Memory

Memory in LangChain refers to the capability to store and recall past interactions. This feature is crucial for creating applications that require contextual awareness and continuity. LangChain provides various tools to integrate memory into your systems, catering to both simple and complex needs.

Memory can be seamlessly incorporated into chains, allowing them to read from and write to stored data. The information held in memory guides LangChain Chains, enhancing their responses by drawing on past interactions and improving the overall user experience.
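The read-and-write pattern described above can be illustrated with a small conversation buffer. This is a sketch under stated assumptions rather than LangChain's memory implementation: `ConversationBuffer` and `build_prompt` are hypothetical names, and the buffer keeps only the most recent turns.

```python
from collections import deque


class ConversationBuffer:
    """Keeps the most recent (user, assistant) turns of a conversation."""

    def __init__(self, max_turns: int = 3) -> None:
        # A bounded deque silently drops the oldest turn when full.
        self.turns: deque = deque(maxlen=max_turns)

    def save(self, user: str, assistant: str) -> None:
        """Write side: record a completed exchange."""
        self.turns.append((user, assistant))

    def as_context(self) -> str:
        """Read side: render stored turns as text for the next prompt."""
        return "\n".join(f"User: {u}\nAssistant: {a}" for u, a in self.turns)


def build_prompt(memory: ConversationBuffer, new_input: str) -> str:
    """Prepend remembered turns so the model sees the conversation so far."""
    history = memory.as_context()
    prefix = f"{history}\n" if history else ""
    return f"{prefix}User: {new_input}\nAssistant:"


memory = ConversationBuffer(max_turns=2)
memory.save("Hi", "Hello!")
memory.save("My name is Ada.", "Nice to meet you, Ada.")
memory.save("I like Python.", "Good choice.")

prompt = build_prompt(memory, "What is my name?")
print(prompt)
```

With `max_turns=2`, the first exchange has already been dropped by the time the prompt is built, which shows the trade-off between context length and how far back the application can recall.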

1.3.5. Agents

Agents are dynamic entities within LangChain that utilize the reasoning capabilities of LLMs to determine the sequence of actions in real time. Unlike conventional chains, where the sequence of operations is predefined in the code, Agents leverage the intelligence of language models to decide the next steps and their order dynamically.

This makes them highly adaptable and powerful for orchestrating complex tasks, as they can adjust their actions based on the application's context and evolving needs.
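The difference from a fixed chain can be sketched as a loop in which a decision function picks the next tool at run time. Everything here is a hypothetical illustration, not LangChain's agent API: `fake_llm_decide` stands in for the LLM's reasoning with hard-coded rules, and `TOOLS` and `run_agent` are assumed names.

```python
from typing import Any, Callable

# Tools the agent is allowed to call (illustrative only).
TOOLS: dict[str, Callable[..., float]] = {
    "add": lambda a, b: a + b,
    "multiply": lambda a, b: a * b,
}


def fake_llm_decide(question: str, observations: list[float]) -> tuple[str, Any]:
    """Stand-in for LLM reasoning: choose the next action from results so far."""
    if not observations:
        return ("multiply", (6, 7))
    if len(observations) == 1:
        return ("add", (observations[0], 8))
    return ("finish", observations[-1])


def run_agent(question: str, max_steps: int = 5) -> float:
    """Loop: ask for the next action, run the tool, record the observation."""
    observations: list[float] = []
    for _ in range(max_steps):
        action, argument = fake_llm_decide(question, observations)
        if action == "finish":
            return argument
        observations.append(TOOLS[action](*argument))
    raise RuntimeError("Agent did not finish within max_steps")


answer = run_agent("What is 6 * 7 + 8?")
print(answer)
```

The order of tool calls is not written into `run_agent`; it emerges from the decisions returned at each step, and the `max_steps` limit guards against a decision function that never finishes.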

1.4. LangChain Ecosystem

The LangChain ecosystem comprises the following key components:

LangSmith: LangSmith assists in tracing and evaluating your language model applications and intelligent agents. It supports the transition from prototype to production, ensuring robust and reliable deployments.

LangGraph: LangGraph is a powerful tool for building stateful, multi-actor applications with LLMs. It leverages LangChain primitives, providing an advanced framework for developing complex, interactive applications.

LangServe: LangServe enables the deployment of LangChain runnables and chains as REST APIs. This tool simplifies making your LangChain applications accessible over the web, facilitating easy integration with other systems and services.
